Upload 71 files

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +2 -0

- .gitignore +162 -0

- Dockerfile +9 -0

- INSTALL.md +128 -0

- README.md +91 -0

- assets/cat.gif +0 -0

- assets/custom/face1.png +0 -0

- assets/custom/face2.png +0 -0

- assets/demo.png +0 -0

- assets/horse.gif +3 -0

- assets/mouse.gif +0 -0

- assets/nose.gif +3 -0

- assets/paper.png +0 -0

- colab.ipynb +76 -0

- draggan/__init__.py +3 -0

- draggan/deprecated/__init__.py +3 -0

- draggan/deprecated/api.py +244 -0

- draggan/deprecated/stylegan2/__init__.py +0 -0

- draggan/deprecated/stylegan2/inversion.py +209 -0

- draggan/deprecated/stylegan2/lpips/__init__.py +5 -0

- draggan/deprecated/stylegan2/lpips/base_model.py +58 -0

- draggan/deprecated/stylegan2/lpips/dist_model.py +314 -0

- draggan/deprecated/stylegan2/lpips/networks_basic.py +188 -0

- draggan/deprecated/stylegan2/lpips/pretrained_networks.py +181 -0

- draggan/deprecated/stylegan2/lpips/util.py +160 -0

- draggan/deprecated/stylegan2/model.py +713 -0

- draggan/deprecated/stylegan2/op/__init__.py +2 -0

- draggan/deprecated/stylegan2/op/conv2d_gradfix.py +229 -0

- draggan/deprecated/stylegan2/op/fused_act.py +157 -0

- draggan/deprecated/stylegan2/op/fused_bias_act.cpp +32 -0

- draggan/deprecated/stylegan2/op/fused_bias_act_kernel.cu +105 -0

- draggan/deprecated/stylegan2/op/upfirdn2d.cpp +31 -0

- draggan/deprecated/stylegan2/op/upfirdn2d.py +232 -0

- draggan/deprecated/stylegan2/op/upfirdn2d_kernel.cu +369 -0

- draggan/deprecated/utils.py +216 -0

- draggan/deprecated/web.py +319 -0

- draggan/draggan.py +355 -0

- draggan/stylegan2/LICENSE.txt +97 -0

- draggan/stylegan2/__init__.py +0 -0

- draggan/stylegan2/dnnlib/__init__.py +9 -0

- draggan/stylegan2/dnnlib/util.py +477 -0

- draggan/stylegan2/legacy.py +320 -0

- draggan/stylegan2/torch_utils/__init__.py +9 -0

- draggan/stylegan2/torch_utils/custom_ops.py +126 -0

- draggan/stylegan2/torch_utils/misc.py +262 -0

- draggan/stylegan2/torch_utils/ops/__init__.py +9 -0

- draggan/stylegan2/torch_utils/ops/bias_act.cpp +99 -0

- draggan/stylegan2/torch_utils/ops/bias_act.cu +173 -0

- draggan/stylegan2/torch_utils/ops/bias_act.h +38 -0

- draggan/stylegan2/torch_utils/ops/bias_act.py +212 -0

.gitattributes

ADDED

|

@@ -0,0 +1,2 @@

|

|

|

|

|

|

|

|

|

|

| 1 |

+

assets/horse.gif filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

assets/nose.gif filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,162 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Byte-compiled / optimized / DLL files

|

| 2 |

+

__pycache__/

|

| 3 |

+

*.py[cod]

|

| 4 |

+

*$py.class

|

| 5 |

+

|

| 6 |

+

# C extensions

|

| 7 |

+

*.so

|

| 8 |

+

|

| 9 |

+

# Distribution / packaging

|

| 10 |

+

.Python

|

| 11 |

+

build/

|

| 12 |

+

develop-eggs/

|

| 13 |

+

dist/

|

| 14 |

+

downloads/

|

| 15 |

+

eggs/

|

| 16 |

+

.eggs/

|

| 17 |

+

lib/

|

| 18 |

+

lib64/

|

| 19 |

+

parts/

|

| 20 |

+

sdist/

|

| 21 |

+

var/

|

| 22 |

+

wheels/

|

| 23 |

+

share/python-wheels/

|

| 24 |

+

*.egg-info/

|

| 25 |

+

.installed.cfg

|

| 26 |

+

*.egg

|

| 27 |

+

MANIFEST

|

| 28 |

+

|

| 29 |

+

# PyInstaller

|

| 30 |

+

# Usually these files are written by a python script from a template

|

| 31 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 32 |

+

*.manifest

|

| 33 |

+

*.spec

|

| 34 |

+

|

| 35 |

+

# Installer logs

|

| 36 |

+

pip-log.txt

|

| 37 |

+

pip-delete-this-directory.txt

|

| 38 |

+

|

| 39 |

+

# Unit test / coverage reports

|

| 40 |

+

htmlcov/

|

| 41 |

+

.tox/

|

| 42 |

+

.nox/

|

| 43 |

+

.coverage

|

| 44 |

+

.coverage.*

|

| 45 |

+

.cache

|

| 46 |

+

nosetests.xml

|

| 47 |

+

coverage.xml

|

| 48 |

+

*.cover

|

| 49 |

+

*.py,cover

|

| 50 |

+

.hypothesis/

|

| 51 |

+

.pytest_cache/

|

| 52 |

+

cover/

|

| 53 |

+

|

| 54 |

+

# Translations

|

| 55 |

+

*.mo

|

| 56 |

+

*.pot

|

| 57 |

+

|

| 58 |

+

# Django stuff:

|

| 59 |

+

*.log

|

| 60 |

+

local_settings.py

|

| 61 |

+

db.sqlite3

|

| 62 |

+

db.sqlite3-journal

|

| 63 |

+

|

| 64 |

+

# Flask stuff:

|

| 65 |

+

instance/

|

| 66 |

+

.webassets-cache

|

| 67 |

+

|

| 68 |

+

# Scrapy stuff:

|

| 69 |

+

.scrapy

|

| 70 |

+

|

| 71 |

+

# Sphinx documentation

|

| 72 |

+

docs/_build/

|

| 73 |

+

|

| 74 |

+

# PyBuilder

|

| 75 |

+

.pybuilder/

|

| 76 |

+

target/

|

| 77 |

+

|

| 78 |

+

# Jupyter Notebook

|

| 79 |

+

.ipynb_checkpoints

|

| 80 |

+

|

| 81 |

+

# IPython

|

| 82 |

+

profile_default/

|

| 83 |

+

ipython_config.py

|

| 84 |

+

|

| 85 |

+

# pyenv

|

| 86 |

+

# For a library or package, you might want to ignore these files since the code is

|

| 87 |

+

# intended to run in multiple environments; otherwise, check them in:

|

| 88 |

+

# .python-version

|

| 89 |

+

|

| 90 |

+

# pipenv

|

| 91 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 92 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 93 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 94 |

+

# install all needed dependencies.

|

| 95 |

+

#Pipfile.lock

|

| 96 |

+

|

| 97 |

+

# poetry

|

| 98 |

+

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

|

| 99 |

+

# This is especially recommended for binary packages to ensure reproducibility, and is more

|

| 100 |

+

# commonly ignored for libraries.

|

| 101 |

+

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

|

| 102 |

+

#poetry.lock

|

| 103 |

+

|

| 104 |

+

# pdm

|

| 105 |

+

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

|

| 106 |

+

#pdm.lock

|

| 107 |

+

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

|

| 108 |

+

# in version control.

|

| 109 |

+

# https://pdm.fming.dev/#use-with-ide

|

| 110 |

+

.pdm.toml

|

| 111 |

+

|

| 112 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

|

| 113 |

+

__pypackages__/

|

| 114 |

+

|

| 115 |

+

# Celery stuff

|

| 116 |

+

celerybeat-schedule

|

| 117 |

+

celerybeat.pid

|

| 118 |

+

|

| 119 |

+

# SageMath parsed files

|

| 120 |

+

*.sage.py

|

| 121 |

+

|

| 122 |

+

# Environments

|

| 123 |

+

.env

|

| 124 |

+

.venv

|

| 125 |

+

env/

|

| 126 |

+

venv/

|

| 127 |

+

ENV/

|

| 128 |

+

env.bak/

|

| 129 |

+

venv.bak/

|

| 130 |

+

|

| 131 |

+

# Spyder project settings

|

| 132 |

+

.spyderproject

|

| 133 |

+

.spyproject

|

| 134 |

+

|

| 135 |

+

# Rope project settings

|

| 136 |

+

.ropeproject

|

| 137 |

+

|

| 138 |

+

# mkdocs documentation

|

| 139 |

+

/site

|

| 140 |

+

|

| 141 |

+

# mypy

|

| 142 |

+

.mypy_cache/

|

| 143 |

+

.dmypy.json

|

| 144 |

+

dmypy.json

|

| 145 |

+

|

| 146 |

+

# Pyre type checker

|

| 147 |

+

.pyre/

|

| 148 |

+

|

| 149 |

+

# pytype static type analyzer

|

| 150 |

+

.pytype/

|

| 151 |

+

|

| 152 |

+

# Cython debug symbols

|

| 153 |

+

cython_debug/

|

| 154 |

+

|

| 155 |

+

# PyCharm

|

| 156 |

+

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

|

| 157 |

+

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

|

| 158 |

+

# and can be added to the global gitignore or merged into this file. For a more nuclear

|

| 159 |

+

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

|

| 160 |

+

.idea/

|

| 161 |

+

checkpoints/

|

| 162 |

+

tmp/

|

Dockerfile

ADDED

|

@@ -0,0 +1,9 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

FROM python:3.7

|

| 2 |

+

|

| 3 |

+

WORKDIR /app

|

| 4 |

+

COPY . .

|

| 5 |

+

EXPOSE 7860

|

| 6 |

+

|

| 7 |

+

RUN pip install --no-cache-dir -r requirements.txt

|

| 8 |

+

|

| 9 |

+

ENTRYPOINT [ "python", "-m", "draggan.web", "--ip", "0.0.0.0"]

|

INSTALL.md

ADDED

|

@@ -0,0 +1,128 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Installation

|

| 2 |

+

|

| 3 |

+

- [System Requirements](#system-requirements)

|

| 4 |

+

- [Install with PyPI](#install-with-pypi)

|

| 5 |

+

- [Install Manually](#install-manually)

|

| 6 |

+

- [Install with Docker](#install-with-docker)

|

| 7 |

+

|

| 8 |

+

## System requirements

|

| 9 |

+

|

| 10 |

+

- This implementation support running on CPU, Nvidia GPU, and Apple's m1/m2 chips.

|

| 11 |

+

- When using with GPU, 8 GB memory is required for 1024 models. 6 GB is recommended for 512 models.

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

## Install with PyPI

|

| 15 |

+

|

| 16 |

+

📑 [Step by Step Tutorial](https://zeqiang-lai.github.io/blog/en/posts/drag_gan/) | [中文部署教程](https://zeqiang-lai.github.io/blog/posts/ai/drag_gan/)

|

| 17 |

+

|

| 18 |

+

We recommend to use Conda to install requirements.

|

| 19 |

+

|

| 20 |

+

```bash

|

| 21 |

+

conda create -n draggan python=3.7

|

| 22 |

+

conda activate draggan

|

| 23 |

+

```

|

| 24 |

+

|

| 25 |

+

Install PyTorch following the [official instructions](https://pytorch.org/get-started/locally/)

|

| 26 |

+

```bash

|

| 27 |

+

conda install pytorch torchvision torchaudio pytorch-cuda=11.7 -c pytorch -c nvidia

|

| 28 |

+

```

|

| 29 |

+

|

| 30 |

+

Install DragGAN

|

| 31 |

+

```bash

|

| 32 |

+

pip install draggan

|

| 33 |

+

# If you meet ERROR: Could not find a version that satisfies the requirement draggan (from versions: none), use

|

| 34 |

+

pip install draggan -i https://pypi.org/simple/

|

| 35 |

+

```

|

| 36 |

+

|

| 37 |

+

Launch the Gradio demo

|

| 38 |

+

|

| 39 |

+

```bash

|

| 40 |

+

# if you have a Nvidia GPU

|

| 41 |

+

python -m draggan.web

|

| 42 |

+

# if you use m1/m2 mac

|

| 43 |

+

python -m draggan.web --device mps

|

| 44 |

+

# otherwise

|

| 45 |

+

python -m draggan.web --device cpu

|

| 46 |

+

```

|

| 47 |

+

|

| 48 |

+

## Install Manually

|

| 49 |

+

|

| 50 |

+

Ensure you have a GPU and CUDA installed. We use Python 3.7 for testing, other versions (>= 3.7) of Python should work too, but not tested. We recommend to use [Conda](https://conda.io/projects/conda/en/stable/user-guide/install/download.html) to prepare all the requirements.

|

| 51 |

+

|

| 52 |

+

For Windows users, you might encounter some issues caused by StyleGAN custom ops, youd could find some solutions from the [issues pannel](https://github.com/Zeqiang-Lai/DragGAN/issues). We are also working on a more friendly package without setup.

|

| 53 |

+

|

| 54 |

+

```bash

|

| 55 |

+

git clone https://github.com/Zeqiang-Lai/DragGAN.git

|

| 56 |

+

cd DragGAN

|

| 57 |

+

conda create -n draggan python=3.7

|

| 58 |

+

conda activate draggan

|

| 59 |

+

pip install -r requirements.txt

|

| 60 |

+

```

|

| 61 |

+

|

| 62 |

+

Launch the Gradio demo

|

| 63 |

+

|

| 64 |

+

```bash

|

| 65 |

+

# if you have a Nvidia GPU

|

| 66 |

+

python gradio_app.py

|

| 67 |

+

# if you use m1/m2 mac

|

| 68 |

+

python gradio_app.py --device mps

|

| 69 |

+

# otherwise

|

| 70 |

+

python gradio_app.py --device cpu

|

| 71 |

+

```

|

| 72 |

+

|

| 73 |

+

> If you have any issue for downloading the checkpoint, you could manually download it from [here](https://huggingface.co/aaronb/StyleGAN2/tree/main) and put it into the folder `checkpoints`.

|

| 74 |

+

|

| 75 |

+

## Install with Docker

|

| 76 |

+

|

| 77 |

+

Follow these steps to run DragGAN using Docker:

|

| 78 |

+

|

| 79 |

+

### Prerequisites

|

| 80 |

+

|

| 81 |

+

1. Install Docker on your system from the [official Docker website](https://www.docker.com/).

|

| 82 |

+

2. Ensure that your system has [NVIDIA Docker support](https://github.com/NVIDIA/nvidia-docker) if you are using GPUs.

|

| 83 |

+

|

| 84 |

+

### Run using docker Hub image

|

| 85 |

+

|

| 86 |

+

```bash

|

| 87 |

+

# For GPU

|

| 88 |

+

docker run -t -p 7860:7860 --gpus all baydarov/draggan

|

| 89 |

+

```

|

| 90 |

+

|

| 91 |

+

```bash

|

| 92 |

+

# For CPU only (not recommended)

|

| 93 |

+

docker run -t -p 7860:7860 baydarov/draggan --device cpu

|

| 94 |

+

```

|

| 95 |

+

|

| 96 |

+

### Step-by-step Guide with building image locally

|

| 97 |

+

|

| 98 |

+

1. Clone the DragGAN repository and build the Docker image:

|

| 99 |

+

|

| 100 |

+

```bash

|

| 101 |

+

git clone https://github.com/Zeqiang-Lai/DragGAN.git # clone repo

|

| 102 |

+

cd DragGAN # change into the repo directory

|

| 103 |

+

docker build -t draggan . # build image

|

| 104 |

+

```

|

| 105 |

+

|

| 106 |

+

2. Run the DragGAN Docker container:

|

| 107 |

+

|

| 108 |

+

```bash

|

| 109 |

+

# For GPU

|

| 110 |

+

docker run -t -p 7860:7860 --gpus all draggan

|

| 111 |

+

```

|

| 112 |

+

|

| 113 |

+

```bash

|

| 114 |

+

# For CPU (not recommended)

|

| 115 |

+

docker run -t -p 7860:7860 draggan --device cpu

|

| 116 |

+

```

|

| 117 |

+

|

| 118 |

+

3. The DragGAN Web UI will be accessible once you see the following output in your console:

|

| 119 |

+

|

| 120 |

+

```

|

| 121 |

+

...

|

| 122 |

+

Running on local URL: http://0.0.0.0:7860

|

| 123 |

+

...

|

| 124 |

+

```

|

| 125 |

+

|

| 126 |

+

Visit [http://localhost:7860](http://localhost:7860/) to access the Web UI.

|

| 127 |

+

|

| 128 |

+

That's it! You're now running DragGAN in a Docker container.

|

README.md

ADDED

|

@@ -0,0 +1,91 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# DragGAN

|

| 2 |

+

[](https://pypi.org/project/draggan/)

|

| 3 |

+

[](#running-locally)

|

| 4 |

+

|

| 5 |

+

:boom: [`Colab Demo`](https://colab.research.google.com/github/Zeqiang-Lai/DragGAN/blob/master/colab.ipynb) [`Awesome-DragGAN`](https://github.com/OpenGVLab/Awesome-DragGAN) [`InternGPT Demo`](https://github.com/OpenGVLab/InternGPT) [`Local Deployment`](#running-locally)

|

| 6 |

+

|

| 7 |

+

> **Note for Colab, remember to select a GPU via `Runtime/Change runtime type` (`代码执行程序/更改运行时类型`).**

|

| 8 |

+

>

|

| 9 |

+

> If you want to upload custom image, please install 1.1.0 via `pip install draggan==1.1.0`.

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

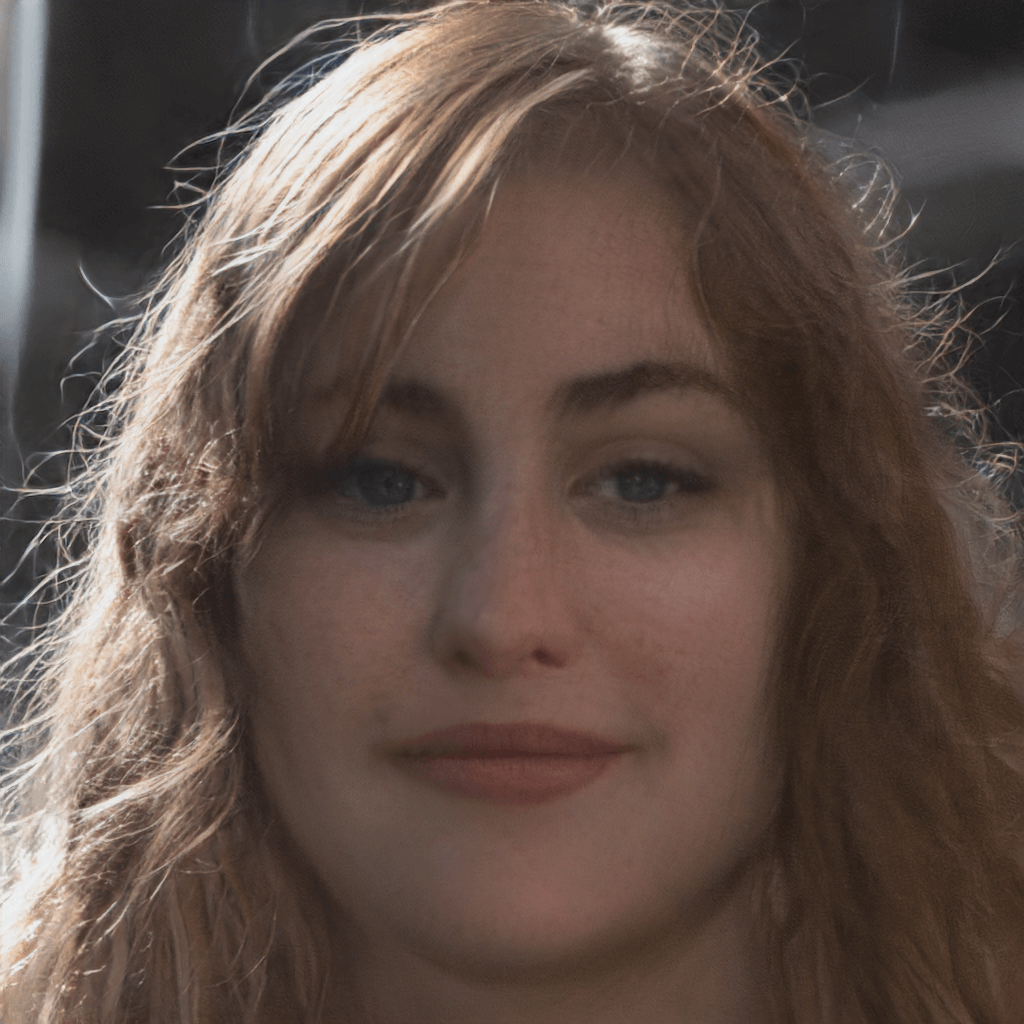

Unofficial implementation of [Drag Your GAN: Interactive Point-based Manipulation on the Generative Image Manifold](https://vcai.mpi-inf.mpg.de/projects/DragGAN/)

|

| 13 |

+

|

| 14 |

+

<p float="left">

|

| 15 |

+

<img src="assets/mouse.gif" width="200" />

|

| 16 |

+

<img src="assets/nose.gif" width="200" />

|

| 17 |

+

<img src="assets/cat.gif" width="200" />

|

| 18 |

+

<img src="assets/horse.gif" width="200" />

|

| 19 |

+

</p>

|

| 20 |

+

|

| 21 |

+

## How it Work ?

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

Here is a simple tutorial video showing how to use our implementation.

|

| 25 |

+

|

| 26 |

+

https://github.com/Zeqiang-Lai/DragGAN/assets/26198430/f1516101-5667-4f73-9330-57fc45754283

|

| 27 |

+

|

| 28 |

+

Check out the original [paper](https://vcai.mpi-inf.mpg.de/projects/DragGAN/) for the backend algorithm and math.

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

## News

|

| 33 |

+

|

| 34 |

+

:star2: **What's New**

|

| 35 |

+

|

| 36 |

+

- [2023/6/25] Relase version 1.1.1, it includes a major bug fix and speed improvement.

|

| 37 |

+

- [2023/6/25] [Official Code](https://github.com/XingangPan/DragGAN) is released, check it out.

|

| 38 |

+

- [2023/5/29] A new version is in beta, install via `pip install draggan==1.1.0b2`, includes speed improvement and more models.

|

| 39 |

+

- [2023/5/25] DragGAN is on PyPI, simple install via `pip install draggan`. Also addressed the common CUDA problems https://github.com/Zeqiang-Lai/DragGAN/issues/38 https://github.com/Zeqiang-Lai/DragGAN/issues/12

|

| 40 |

+

- [2023/5/25] We now support StyleGAN2-ada with much higher quality and more types of images. Try it by selecting models started with "ada".

|

| 41 |

+

- [2023/5/24] An out-of-box online demo is integrated in [InternGPT](https://github.com/OpenGVLab/InternGPT) - a super cool pointing-language-driven visual interactive system. Enjoy for free.:lollipop:

|

| 42 |

+

- [2023/5/24] Custom Image with GAN inversion is supported, but it is possible that your custom images are distorted due to the limitation of GAN inversion. Besides, it is also possible the manipulations fail due to the limitation of our implementation.

|

| 43 |

+

|

| 44 |

+

:star2: **Changelog**

|

| 45 |

+

|

| 46 |

+

- [x] Add a docker image, thanks [@egbaydarov](https://github.com/egbaydarov).

|

| 47 |

+

- [ ] PTI GAN inversion https://github.com/Zeqiang-Lai/DragGAN/issues/71#issuecomment-1573461314

|

| 48 |

+

- [x] Tweak performance, See [v2](https://github.com/Zeqiang-Lai/DragGAN/tree/v2).

|

| 49 |

+

- [x] Improving installation experience, DragGAN is now on [PyPI](https://pypi.org/project/draggan).

|

| 50 |

+

- [x] Automatically determining the number of iterations, See [v2](https://github.com/Zeqiang-Lai/DragGAN/tree/v2).

|

| 51 |

+

- [ ] Allow to save video without point annotations, custom image size.

|

| 52 |

+

- [x] Support StyleGAN2-ada.

|

| 53 |

+

- [x] Integrate into [InternGPT](https://github.com/OpenGVLab/InternGPT)

|

| 54 |

+

- [x] Custom Image with GAN inversion.

|

| 55 |

+

- [x] Download generated image and generation trajectory.

|

| 56 |

+

- [x] Controlling generation process with GUI.

|

| 57 |

+

- [x] Automatically download stylegan2 checkpoint.

|

| 58 |

+

- [x] Support movable region, multiple handle points.

|

| 59 |

+

- [x] Gradio and Colab Demo.

|

| 60 |

+

|

| 61 |

+

> This project is now a sub-project of [InternGPT](https://github.com/OpenGVLab/InternGPT) for interactive image editing. Future updates of more cool tools beyond DragGAN would be added in [InternGPT](https://github.com/OpenGVLab/InternGPT).

|

| 62 |

+

|

| 63 |

+

## Running Locally

|

| 64 |

+

|

| 65 |

+

Please refer to [INSTALL.md](INSTALL.md).

|

| 66 |

+

|

| 67 |

+

|

| 68 |

+

## Citation

|

| 69 |

+

|

| 70 |

+

```bibtex

|

| 71 |

+

@inproceedings{pan2023draggan,

|

| 72 |

+

title={Drag Your GAN: Interactive Point-based Manipulation on the Generative Image Manifold},

|

| 73 |

+

author={Pan, Xingang and Tewari, Ayush, and Leimk{\"u}hler, Thomas and Liu, Lingjie and Meka, Abhimitra and Theobalt, Christian},

|

| 74 |

+

booktitle = {ACM SIGGRAPH 2023 Conference Proceedings},

|

| 75 |

+

year={2023}

|

| 76 |

+

}

|

| 77 |

+

```

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

## Acknowledgement

|

| 81 |

+

|

| 82 |

+

[Official DragGAN](https://github.com/XingangPan/DragGAN)   [DragGAN-Streamlit](https://github.com/skimai/DragGAN)   [StyleGAN2](https://github.com/NVlabs/stylegan2)   [StyleGAN2-pytorch](https://github.com/rosinality/stylegan2-pytorch)   [StyleGAN2-Ada](https://github.com/NVlabs/stylegan2-ada-pytorch)   [StyleGAN-Human](https://github.com/stylegan-human/StyleGAN-Human)   [Self-Distilled-StyleGAN](https://github.com/self-distilled-stylegan/self-distilled-internet-photos)

|

| 83 |

+

|

| 84 |

+

Welcome to discuss with us and continuously improve the user experience of DragGAN.

|

| 85 |

+

Reach us with this WeChat QR Code.

|

| 86 |

+

|

| 87 |

+

|

| 88 |

+

<p align="left"><img width="300" alt="image" src="https://github.com/OpenGVLab/DragGAN/assets/26198430/885cb87a-4acc-490d-8a45-96f3ab870611"><img width="300" alt="image" src="https://github.com/OpenGVLab/DragGAN/assets/26198430/e3f0807f-956a-474e-8fd2-1f7c22d73997"></p>

|

| 89 |

+

|

| 90 |

+

|

| 91 |

+

|

assets/cat.gif

ADDED

|

assets/custom/face1.png

ADDED

|

assets/custom/face2.png

ADDED

|

assets/demo.png

ADDED

|

assets/horse.gif

ADDED

|

Git LFS Details

|

assets/mouse.gif

ADDED

|

assets/nose.gif

ADDED

|

Git LFS Details

|

assets/paper.png

ADDED

|

colab.ipynb

ADDED

|

@@ -0,0 +1,76 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"cells": [

|

| 3 |

+

{

|

| 4 |

+

"attachments": {},

|

| 5 |

+

"cell_type": "markdown",

|

| 6 |

+

"metadata": {},

|

| 7 |

+

"source": [

|

| 8 |

+

"# DragGAN Colab Demo\n",

|

| 9 |

+

"\n",

|

| 10 |

+

"Wild implementation of [Drag Your GAN: Interactive Point-based Manipulation on the Generative Image Manifold](https://vcai.mpi-inf.mpg.de/projects/DragGAN/)\n",

|

| 11 |

+

"\n",

|

| 12 |

+

"**Note for Colab, remember to select a GPU via `Runtime/Change runtime type` (`代码执行程序/更改运行时类型`).**"

|

| 13 |

+

]

|

| 14 |

+

},

|

| 15 |

+

{

|

| 16 |

+

"cell_type": "code",

|

| 17 |

+

"execution_count": null,

|

| 18 |

+

"metadata": {},

|

| 19 |

+

"outputs": [],

|

| 20 |

+

"source": [

|

| 21 |

+

"#@title Installation\n",

|

| 22 |

+

"!git clone https://github.com/Zeqiang-Lai/DragGAN.git\n",

|

| 23 |

+

"\n",

|

| 24 |

+

"import sys\n",

|

| 25 |

+

"sys.path.append(\".\")\n",

|

| 26 |

+

"sys.path.append('./DragGAN')\n",

|

| 27 |

+

"\n",

|

| 28 |

+

"!pip install -r DragGAN/requirements.txt\n",

|

| 29 |

+

"\n",

|

| 30 |

+

"from gradio_app import main"

|

| 31 |

+

]

|

| 32 |

+

},

|

| 33 |

+

{

|

| 34 |

+

"attachments": {},

|

| 35 |

+

"cell_type": "markdown",

|

| 36 |

+

"metadata": {},

|

| 37 |

+

"source": [

|

| 38 |

+

"**If you have problem in the following demo, such as the incorrected image, or facing errors. Please try to run the following block again.**\n",

|

| 39 |

+

"\n",

|

| 40 |

+

"If the errors still exist, you could fire an issue on [Github](https://github.com/Zeqiang-Lai/DragGAN)."

|

| 41 |

+

]

|

| 42 |

+

},

|

| 43 |

+

{

|

| 44 |

+

"cell_type": "code",

|

| 45 |

+

"execution_count": null,

|

| 46 |

+

"metadata": {},

|

| 47 |

+

"outputs": [],

|

| 48 |

+

"source": [

|

| 49 |

+

"demo = main()\n",

|

| 50 |

+

"demo.queue(concurrency_count=1, max_size=20).launch()"

|

| 51 |

+

]

|

| 52 |

+

}

|

| 53 |

+

],

|

| 54 |

+

"metadata": {

|

| 55 |

+

"kernelspec": {

|

| 56 |

+

"display_name": "torch1.10",

|

| 57 |

+

"language": "python",

|

| 58 |

+

"name": "python3"

|

| 59 |

+

},

|

| 60 |

+

"language_info": {

|

| 61 |

+

"codemirror_mode": {

|

| 62 |

+

"name": "ipython",

|

| 63 |

+

"version": 3

|

| 64 |

+

},

|

| 65 |

+

"file_extension": ".py",

|

| 66 |

+

"mimetype": "text/x-python",

|

| 67 |

+

"name": "python",

|

| 68 |

+

"nbconvert_exporter": "python",

|

| 69 |

+

"pygments_lexer": "ipython3",

|

| 70 |

+

"version": "3.7.12"

|

| 71 |

+

},

|

| 72 |

+

"orig_nbformat": 4

|

| 73 |

+

},

|

| 74 |

+

"nbformat": 4,

|

| 75 |

+

"nbformat_minor": 2

|

| 76 |

+

}

|

draggan/__init__.py

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from .utils import BASE_DIR

|

| 2 |

+

|

| 3 |

+

home = BASE_DIR

|

draggan/deprecated/__init__.py

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from .utils import BASE_DIR

|

| 2 |

+

|

| 3 |

+

home = BASE_DIR

|

draggan/deprecated/api.py

ADDED

|

@@ -0,0 +1,244 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import copy

|

| 2 |

+

import random

|

| 3 |

+

|

| 4 |

+

import torch

|

| 5 |

+

import torch.nn.functional as FF

|

| 6 |

+

import torch.optim

|

| 7 |

+

|

| 8 |

+

from . import utils

|

| 9 |

+

from .stylegan2.model import Generator

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

class CustomGenerator(Generator):

|

| 13 |

+

def prepare(

|

| 14 |

+

self,

|

| 15 |

+

styles,

|

| 16 |

+

inject_index=None,

|

| 17 |

+

truncation=1,

|

| 18 |

+

truncation_latent=None,

|

| 19 |

+

input_is_latent=False,

|

| 20 |

+

noise=None,

|

| 21 |

+

randomize_noise=True,

|

| 22 |

+

):

|

| 23 |

+

if not input_is_latent:

|

| 24 |

+

styles = [self.style(s) for s in styles]

|

| 25 |

+

|

| 26 |

+

if noise is None:

|

| 27 |

+

if randomize_noise:

|

| 28 |

+

noise = [None] * self.num_layers

|

| 29 |

+

else:

|

| 30 |

+

noise = [

|

| 31 |

+

getattr(self.noises, f"noise_{i}") for i in range(self.num_layers)

|

| 32 |

+

]

|

| 33 |

+

|

| 34 |

+

if truncation < 1:

|

| 35 |

+

style_t = []

|

| 36 |

+

|

| 37 |

+

for style in styles:

|

| 38 |

+

style_t.append(

|

| 39 |

+

truncation_latent + truncation * (style - truncation_latent)

|

| 40 |

+

)

|

| 41 |

+

|

| 42 |

+

styles = style_t

|

| 43 |

+

|

| 44 |

+

if len(styles) < 2:

|

| 45 |

+

inject_index = self.n_latent

|

| 46 |

+

|

| 47 |

+

if styles[0].ndim < 3:

|

| 48 |

+

latent = styles[0].unsqueeze(1).repeat(1, inject_index, 1)

|

| 49 |

+

|

| 50 |

+

else:

|

| 51 |

+

latent = styles[0]

|

| 52 |

+

|

| 53 |

+

else:

|

| 54 |

+

if inject_index is None:

|

| 55 |

+

inject_index = random.randint(1, self.n_latent - 1)

|

| 56 |

+

|

| 57 |

+

latent = styles[0].unsqueeze(1).repeat(1, inject_index, 1)

|

| 58 |

+

latent2 = styles[1].unsqueeze(1).repeat(1, self.n_latent - inject_index, 1)

|

| 59 |

+

|

| 60 |

+

latent = torch.cat([latent, latent2], 1)

|

| 61 |

+

|

| 62 |

+

return latent, noise

|

| 63 |

+

|

| 64 |

+

def generate(

|

| 65 |

+

self,

|

| 66 |

+

latent,

|

| 67 |

+

noise,

|

| 68 |

+

):

|

| 69 |

+

out = self.input(latent)

|

| 70 |

+

out = self.conv1(out, latent[:, 0], noise=noise[0])

|

| 71 |

+

|

| 72 |

+

skip = self.to_rgb1(out, latent[:, 1])

|

| 73 |

+

i = 1

|

| 74 |

+

for conv1, conv2, noise1, noise2, to_rgb in zip(

|

| 75 |

+

self.convs[::2], self.convs[1::2], noise[1::2], noise[2::2], self.to_rgbs

|

| 76 |

+

):

|

| 77 |

+

out = conv1(out, latent[:, i], noise=noise1)

|

| 78 |

+

out = conv2(out, latent[:, i + 1], noise=noise2)

|

| 79 |

+

skip = to_rgb(out, latent[:, i + 2], skip)

|

| 80 |

+

if out.shape[-1] == 256: F = out

|

| 81 |

+

i += 2

|

| 82 |

+

|

| 83 |

+

image = skip

|

| 84 |

+

F = FF.interpolate(F, image.shape[-2:], mode='bilinear')

|

| 85 |

+

return image, F

|

| 86 |

+

|

| 87 |

+

|

| 88 |

+

def stylegan2(

|

| 89 |

+

size=1024,

|

| 90 |

+

channel_multiplier=2,

|

| 91 |

+

latent=512,

|

| 92 |

+

n_mlp=8,

|

| 93 |

+

ckpt='stylegan2-ffhq-config-f.pt'

|

| 94 |

+

):

|

| 95 |

+

g_ema = CustomGenerator(size, latent, n_mlp, channel_multiplier=channel_multiplier, human='human' in ckpt)

|

| 96 |

+

checkpoint = torch.load(utils.get_path(ckpt))

|

| 97 |

+

g_ema.load_state_dict(checkpoint["g_ema"], strict=False)

|

| 98 |

+

g_ema.requires_grad_(False)

|

| 99 |

+

g_ema.eval()

|

| 100 |

+

return g_ema

|

| 101 |

+

|

| 102 |

+

|

| 103 |

+

def drag_gan(

|

| 104 |

+

g_ema,

|

| 105 |

+

latent: torch.Tensor,

|

| 106 |

+

noise,

|

| 107 |

+

F,

|

| 108 |

+

handle_points,

|

| 109 |

+

target_points,

|

| 110 |

+

mask,

|

| 111 |

+

max_iters=1000,

|

| 112 |

+

r1=3,

|

| 113 |

+

r2=12,

|

| 114 |

+

lam=20,

|

| 115 |

+

d=2,

|

| 116 |

+

lr=2e-3,

|

| 117 |

+

):

|

| 118 |

+

handle_points0 = copy.deepcopy(handle_points)

|

| 119 |

+

handle_points = torch.stack(handle_points)

|

| 120 |

+

handle_points0 = torch.stack(handle_points0)

|

| 121 |

+

target_points = torch.stack(target_points)

|

| 122 |

+

|

| 123 |

+

F0 = F.detach().clone()

|

| 124 |

+

device = latent.device

|

| 125 |

+

|

| 126 |

+

latent_trainable = latent[:, :6, :].detach().clone().requires_grad_(True)

|

| 127 |

+

latent_untrainable = latent[:, 6:, :].detach().clone().requires_grad_(False)

|

| 128 |

+

optimizer = torch.optim.Adam([latent_trainable], lr=lr)

|

| 129 |

+

for _ in range(max_iters):

|

| 130 |

+

if torch.allclose(handle_points, target_points, atol=d):

|

| 131 |

+

break

|

| 132 |

+

|

| 133 |

+

optimizer.zero_grad()

|

| 134 |

+

latent = torch.cat([latent_trainable, latent_untrainable], dim=1)

|

| 135 |

+

sample2, F2 = g_ema.generate(latent, noise)

|

| 136 |

+

|

| 137 |

+

# motion supervision

|

| 138 |

+

loss = motion_supervison(handle_points, target_points, F2, r1, device)

|

| 139 |

+

|

| 140 |

+

if mask is not None:

|

| 141 |

+

loss += ((F2 - F0) * (1 - mask)).abs().mean() * lam

|

| 142 |

+

|

| 143 |

+

loss.backward()

|

| 144 |

+

optimizer.step()

|

| 145 |

+

|

| 146 |

+

with torch.no_grad():

|

| 147 |

+

latent = torch.cat([latent_trainable, latent_untrainable], dim=1)

|

| 148 |

+

sample2, F2 = g_ema.generate(latent, noise)

|

| 149 |

+

handle_points = point_tracking(F2, F0, handle_points, handle_points0, r2, device)

|

| 150 |

+

|

| 151 |

+

F = F2.detach().clone()

|

| 152 |

+

# if iter % 1 == 0:

|

| 153 |

+

# print(iter, loss.item(), handle_points, target_points)

|

| 154 |

+

|

| 155 |

+

yield sample2, latent, F2, handle_points

|

| 156 |

+

|

| 157 |

+

|

| 158 |

+

def motion_supervison(handle_points, target_points, F2, r1, device):

|

| 159 |

+

loss = 0

|

| 160 |

+

n = len(handle_points)

|

| 161 |

+

for i in range(n):

|

| 162 |

+

target2handle = target_points[i] - handle_points[i]

|

| 163 |

+

d_i = target2handle / (torch.norm(target2handle) + 1e-7)

|

| 164 |

+

if torch.norm(d_i) > torch.norm(target2handle):

|

| 165 |

+

d_i = target2handle

|

| 166 |

+

|

| 167 |

+

mask = utils.create_circular_mask(

|

| 168 |

+

F2.shape[2], F2.shape[3], center=handle_points[i].tolist(), radius=r1

|

| 169 |

+

).to(device)

|

| 170 |

+

|

| 171 |

+

coordinates = torch.nonzero(mask).float() # shape [num_points, 2]

|

| 172 |

+

|

| 173 |

+

# Shift the coordinates in the direction d_i

|

| 174 |

+

shifted_coordinates = coordinates + d_i[None]

|

| 175 |

+

|

| 176 |

+

h, w = F2.shape[2], F2.shape[3]

|

| 177 |

+

|

| 178 |

+

# Extract features in the mask region and compute the loss

|

| 179 |

+

F_qi = F2[:, :, mask] # shape: [C, H*W]

|

| 180 |

+

|

| 181 |

+

# Sample shifted patch from F

|

| 182 |

+

normalized_shifted_coordinates = shifted_coordinates.clone()

|

| 183 |

+

normalized_shifted_coordinates[:, 0] = (

|

| 184 |

+

2.0 * shifted_coordinates[:, 0] / (h - 1)

|

| 185 |

+

) - 1 # for height

|

| 186 |

+

normalized_shifted_coordinates[:, 1] = (

|

| 187 |

+

2.0 * shifted_coordinates[:, 1] / (w - 1)

|

| 188 |

+

) - 1 # for width

|

| 189 |

+

# Add extra dimensions for batch and channels (required by grid_sample)

|

| 190 |

+

normalized_shifted_coordinates = normalized_shifted_coordinates.unsqueeze(

|

| 191 |

+

0

|

| 192 |

+

).unsqueeze(

|

| 193 |

+

0

|

| 194 |

+

) # shape [1, 1, num_points, 2]

|

| 195 |

+

normalized_shifted_coordinates = normalized_shifted_coordinates.flip(

|

| 196 |

+

-1

|

| 197 |

+

) # grid_sample expects [x, y] instead of [y, x]

|

| 198 |

+

normalized_shifted_coordinates = normalized_shifted_coordinates.clamp(-1, 1)

|

| 199 |

+

|

| 200 |

+

# Use grid_sample to interpolate the feature map F at the shifted patch coordinates

|

| 201 |

+

F_qi_plus_di = torch.nn.functional.grid_sample(

|

| 202 |

+

F2, normalized_shifted_coordinates, mode="bilinear", align_corners=True

|

| 203 |

+

)

|

| 204 |

+

# Output has shape [1, C, 1, num_points] so squeeze it

|

| 205 |

+

F_qi_plus_di = F_qi_plus_di.squeeze(2) # shape [1, C, num_points]

|

| 206 |

+

|

| 207 |

+

loss += torch.nn.functional.l1_loss(F_qi.detach(), F_qi_plus_di)

|

| 208 |

+

return loss

|

| 209 |

+

|

| 210 |

+

|

| 211 |

+

def point_tracking(

|

| 212 |

+

F: torch.Tensor,

|

| 213 |

+

F0: torch.Tensor,

|

| 214 |

+

handle_points: torch.Tensor,

|

| 215 |

+

handle_points0: torch.Tensor,

|

| 216 |

+

r2: int = 3,

|

| 217 |

+

device: torch.device = torch.device("cuda"),

|

| 218 |

+

) -> torch.Tensor:

|

| 219 |

+

|

| 220 |

+

n = handle_points.shape[0] # Number of handle points

|

| 221 |

+

new_handle_points = torch.zeros_like(handle_points)

|

| 222 |

+

|

| 223 |

+

for i in range(n):

|

| 224 |

+

# Compute the patch around the handle point

|

| 225 |

+

patch = utils.create_square_mask(

|

| 226 |

+

F.shape[2], F.shape[3], center=handle_points[i].tolist(), radius=r2

|

| 227 |

+

).to(device)

|

| 228 |

+

|

| 229 |

+

# Find indices where the patch is True

|

| 230 |

+

patch_coordinates = torch.nonzero(patch) # shape [num_points, 2]

|

| 231 |

+

|

| 232 |

+

# Extract features in the patch

|

| 233 |

+

F_qi = F[:, :, patch_coordinates[:, 0], patch_coordinates[:, 1]]

|

| 234 |

+

# Extract feature of the initial handle point

|

| 235 |

+

f_i = F0[:, :, handle_points0[i][0].long(), handle_points0[i][1].long()]

|

| 236 |

+

|

| 237 |

+

# Compute the L1 distance between the patch features and the initial handle point feature

|

| 238 |

+

distances = torch.norm(F_qi - f_i[:, :, None], p=1, dim=1)

|

| 239 |

+

|

| 240 |

+

# Find the new handle point as the one with minimum distance

|

| 241 |

+

min_index = torch.argmin(distances)

|

| 242 |

+

new_handle_points[i] = patch_coordinates[min_index]

|

| 243 |

+

|

| 244 |

+

return new_handle_points

|

draggan/deprecated/stylegan2/__init__.py

ADDED

|

File without changes

|

draggan/deprecated/stylegan2/inversion.py

ADDED

|

@@ -0,0 +1,209 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import math

|

| 2 |

+

import os

|

| 3 |

+

|

| 4 |

+

import torch

|

| 5 |

+

from torch import optim

|

| 6 |

+

from torch.nn import functional as FF

|

| 7 |

+

from torchvision import transforms

|

| 8 |

+

from PIL import Image

|

| 9 |

+

from tqdm import tqdm

|

| 10 |

+

import dataclasses

|

| 11 |

+

|

| 12 |

+

from .lpips import util

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

def noise_regularize(noises):

|

| 16 |

+

loss = 0

|

| 17 |

+

|

| 18 |

+

for noise in noises:

|

| 19 |

+

size = noise.shape[2]

|

| 20 |

+

|

| 21 |

+

while True:

|

| 22 |

+

loss = (

|

| 23 |

+

loss

|

| 24 |

+

+ (noise * torch.roll(noise, shifts=1, dims=3)).mean().pow(2)

|

| 25 |

+

+ (noise * torch.roll(noise, shifts=1, dims=2)).mean().pow(2)

|

| 26 |

+

)

|

| 27 |

+

|

| 28 |

+

if size <= 8:

|

| 29 |

+

break

|

| 30 |

+

|

| 31 |

+

noise = noise.reshape([-1, 1, size // 2, 2, size // 2, 2])

|

| 32 |

+

noise = noise.mean([3, 5])

|

| 33 |

+

size //= 2

|

| 34 |

+

|

| 35 |

+

return loss

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

def noise_normalize_(noises):

|

| 39 |

+

for noise in noises:

|

| 40 |

+

mean = noise.mean()

|

| 41 |

+

std = noise.std()

|

| 42 |

+

|

| 43 |

+

noise.data.add_(-mean).div_(std)

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

def get_lr(t, initial_lr, rampdown=0.25, rampup=0.05):

|

| 47 |

+

lr_ramp = min(1, (1 - t) / rampdown)

|

| 48 |

+

lr_ramp = 0.5 - 0.5 * math.cos(lr_ramp * math.pi)

|

| 49 |

+

lr_ramp = lr_ramp * min(1, t / rampup)

|

| 50 |

+

|

| 51 |

+

return initial_lr * lr_ramp

|

| 52 |

+

|

| 53 |

+

|

| 54 |

+

def latent_noise(latent, strength):

|

| 55 |

+

noise = torch.randn_like(latent) * strength

|

| 56 |

+

|

| 57 |

+

return latent + noise

|

| 58 |

+

|

| 59 |

+

|

| 60 |

+

def make_image(tensor):

|

| 61 |

+

return (

|

| 62 |

+

tensor.detach()

|

| 63 |

+

.clamp_(min=-1, max=1)

|

| 64 |

+

.add(1)

|

| 65 |

+

.div_(2)

|

| 66 |

+

.mul(255)

|

| 67 |

+

.type(torch.uint8)

|

| 68 |

+

.permute(0, 2, 3, 1)

|

| 69 |

+

.to("cpu")

|

| 70 |

+

.numpy()

|

| 71 |

+

)

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

@dataclasses.dataclass

|

| 75 |

+

class InverseConfig:

|

| 76 |

+

lr_warmup = 0.05

|

| 77 |

+

lr_decay = 0.25

|

| 78 |

+

lr = 0.1

|

| 79 |

+

noise = 0.05

|

| 80 |

+

noise_decay = 0.75

|

| 81 |

+

step = 1000

|

| 82 |

+

noise_regularize = 1e5

|

| 83 |

+

mse = 0

|

| 84 |

+

w_plus = False,

|

| 85 |

+

|

| 86 |

+

|

| 87 |

+

def inverse_image(

|

| 88 |

+

g_ema,

|

| 89 |

+

image,

|

| 90 |

+

image_size=256,

|

| 91 |

+

config=InverseConfig()

|

| 92 |

+

):

|

| 93 |

+

device = "cuda"

|

| 94 |